Training large language models more efficiently

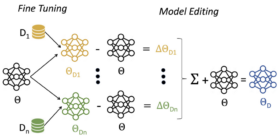

Training separate models on different datasets and then merging them reduces computational costs by as much as 91%.

Conversational AI

Dhananjay Ram, Nikolaos Pappas

March 27, 01:10 PM

Large language models (LLMs) go through several stages of training on mixed datasets with different distributions. These stages include pretraining, instruction tuning, and reinforcement learning from human feedback. Finding the optimal mix of data distributions across datasets is essential to […]
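The excerpt does not describe how the separately trained models are merged. One common, simple approach is weighted averaging of the parameters of models that share an architecture, and the sketch below assumes that technique; it may differ from the authors' actual method. The function name `merge_models`, the dict-of-lists parameter format, and the per-model weights are hypothetical stand-ins for real checkpoints and tensors.

```python
from typing import Dict, List, Sequence

# Hypothetical representation: a model maps each parameter name to a flat
# list of float weights. Real checkpoints would store tensors instead.
Params = Dict[str, List[float]]


def merge_models(models: Sequence[Params], weights: Sequence[float]) -> Params:
    """Merge models by weighted averaging of their parameters (assumed technique).

    Assumes every model shares the same architecture (same parameter names
    and sizes). The per-model weights, loosely analogous to data-mixing
    ratios, are normalized so they sum to 1.
    """
    if not models or len(models) != len(weights):
        raise ValueError("need exactly one weight per model")
    total = sum(weights)
    if total <= 0:
        raise ValueError("weights must sum to a positive value")
    norm = [w / total for w in weights]

    merged: Params = {}
    for name, first in models[0].items():
        size = len(first)
        # Every model must have the same parameter with the same size.
        for m in models:
            if name not in m or len(m[name]) != size:
                raise ValueError(f"parameter {name!r} differs across models")
        # Element-wise weighted average of this parameter across all models.
        merged[name] = [
            sum(a * m[name][i] for a, m in zip(norm, models))
            for i in range(size)
        ]
    return merged


if __name__ == "__main__":
    # Two toy "models" standing in for models trained on separate datasets.
    model_a = {"layer.w": [1.0, 2.0], "layer.b": [0.0]}
    model_b = {"layer.w": [3.0, 4.0], "layer.b": [1.0]}
    print(merge_models([model_a, model_b], [0.5, 0.5]))
    # prints {'layer.w': [2.0, 3.0], 'layer.b': [0.5]}
```

Changing the weights (for example `[3, 1]` instead of `[0.5, 0.5]`) shifts the merged model toward one of the source models, which is the knob this kind of merging exposes in place of retraining on a new data mix.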