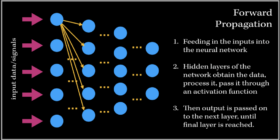

The Journey of Backpropagation in Neural Networks

I’ve always wondered what happens in the ‘backend’ of the training process in Neural Networks. Training is essentially the ‘meat’ of the model: without efficient and effective training, the model cannot accurately predict, classify, or otherwise accomplish a task on new, unseen data. Neural Networks have long been regarded as very useful for prediction analysis, recommendation engines, modeling, and much more; this is because they have the ability to extract […]